Author: Associate Vice President, Analytics and Data Strategy, Quantzig.


Driving Business Success with Data Quality Monitoring

A Fortune 500 company struggled with inaccurate and inconsistent data, which led to inefficient marketing campaigns, poor customer engagement, and flawed financial decision-making. To address these challenges, it partnered with Quantzig to implement a Data Quality Monitoring framework focused on data accuracy, reliability, and completeness. This transformation enabled the company to enhance decision-making, streamline operations, and improve customer satisfaction.

Key Highlights

| Key Metrics | Impact |
|---|---|
| Enhanced Data Accuracy | Improved decision-making across departments |
| Streamlined Marketing Efforts | Increased campaign effectiveness and ROI |
| Improved Customer Satisfaction | Personalized engagement and reduced churn |
| Strengthened Financial Reporting | Reliable insights for strategic planning |

Book a demo to experience the meaningful insights we derive from data through our analytical tools and platform capabilities.

Challenges in Maintaining Data Integrity

A leading Fortune 500 company faced significant challenges due to poor data quality, which affected its marketing, customer engagement, and financial reporting.

| Business Challenges | Impact |
|---|---|
| Inconsistent and inaccurate customer data | Ineffective marketing campaigns and reduced engagement |
| Fragmented financial data | Poor decision-making and compliance risks |
| Lack of real-time data validation | Increased operational inefficiencies |
| Absence of standardized data governance | Data silos and reporting discrepancies |

To overcome these challenges, the company sought a comprehensive Data Quality Monitoring solution to enhance data reliability and operational efficiency.

Achieving Data Excellence with Clear Objectives

To drive meaningful business outcomes, organizations must establish clear objectives that align data quality efforts with operational goals. By focusing on data accuracy, operational efficiency, customer engagement, and governance, companies can transform raw data into a strategic asset. Quantzig’s Data Quality Monitoring framework ensures that businesses maintain high-quality, reliable data to enhance decision-making and optimize performance.

| Objectives | Details |
|---|---|
| Improve Data Accuracy | Ensure high-quality, error-free data for business insights |
| Enhance Operational Efficiency | Streamline processes and reduce manual data correction efforts |
| Strengthen Customer Engagement | Leverage accurate data for targeted marketing strategies |
| Enable Data Governance | Implement standardized protocols for consistent data management |

How Quantzig Transformed Data Quality Management

Quantzig implemented an end-to-end Data Quality Monitoring framework, leveraging advanced analytics and automation to enhance data accuracy and integrity. Our approach included:

- Data Profiling & Cleansing – Identified and rectified inconsistencies in existing datasets.

- Automated Data Validation – Implemented real-time monitoring tools to detect anomalies.

- Standardized Data Governance – Established data governance protocols to maintain uniformity.

- Advanced Analytics Integration – Used AI-driven models to optimize data workflows.

- Ongoing Monitoring & Reporting – Provided continuous insights to prevent future data discrepancies.
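As a rough illustration of what the profiling and validation steps above might involve, the sketch below counts missing, malformed, and duplicated values in a small set of customer records and reports the share of records that pass every check. The field names (`customer_id`, `email`), the email pattern, and the function `profile_records` are hypothetical and chosen only for illustration; the case study does not describe the actual rules or tooling used.

```python
import re
from collections import Counter

# Hypothetical rule: a deliberately simple email shape check, not a full validator.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def profile_records(records):
    """Return per-check failure counts and the share of records passing all checks."""
    # Count how often each ID appears so duplicates can be flagged.
    id_counts = Counter(r.get("customer_id") for r in records)
    failures = {"missing_email": 0, "invalid_email": 0, "duplicate_id": 0}
    clean = 0
    for r in records:
        email = (r.get("email") or "").strip()
        problems = []
        if not email:
            problems.append("missing_email")
        elif not EMAIL_PATTERN.match(email):
            problems.append("invalid_email")
        if id_counts[r.get("customer_id")] > 1:
            problems.append("duplicate_id")
        for p in problems:
            failures[p] += 1
        if not problems:
            clean += 1
    pass_rate = clean / len(records) if records else 0.0
    return {"failures": failures, "pass_rate": pass_rate}


# Illustrative sample data (assumed, not taken from the case study).
sample = [
    {"customer_id": 1, "email": "ana@example.com"},
    {"customer_id": 2, "email": ""},
    {"customer_id": 3, "email": "not-an-email"},
    {"customer_id": 1, "email": "ana@example.com"},
    {"customer_id": 4, "email": "li@example.com"},
]
report = profile_records(sample)
print(report)
```

A production setup would typically run checks like these on a schedule or on each incoming batch and feed the failure counts into a dashboard, but those details are outside what the case study specifies.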

Technology Used

| Tech Stack | Application |

| AI-Driven Data Validation Tools | Real-time anomaly detection and data cleansing |

| Cloud-Based Data Repositories | Centralized and structured data storage |

| Machine Learning Models | Predictive analytics for proactive quality management |

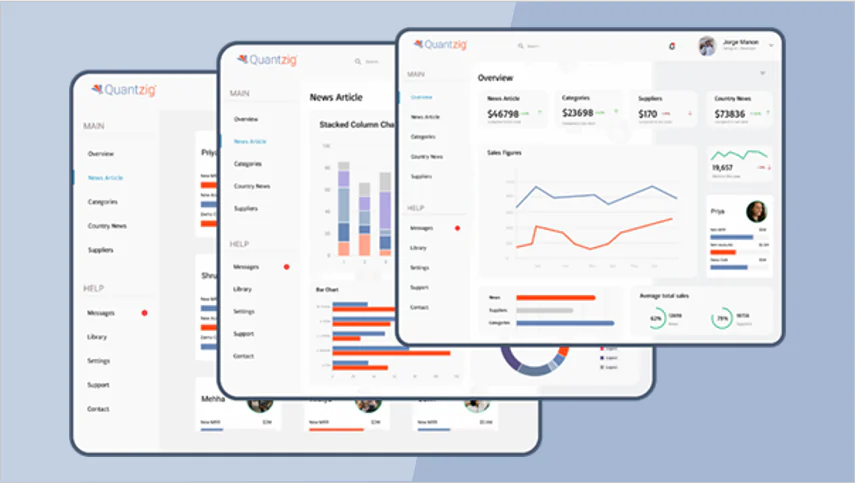

| Business Intelligence Dashboards | Interactive reports for decision-making |

Quantifying the Impact of Data Quality Monitoring

Implementing the Data Quality Monitoring framework improved the company's data accuracy, customer retention, and financial consistency. By addressing data inconsistencies and strengthening governance, the company achieved greater efficiency, more personalized engagement, and better-informed strategic decisions. The following table summarizes the measured changes in key performance indicators.

| Metric | Before | After | Relative Improvement |
|---|---|---|---|
| Data Accuracy | 68% | 95% | +40% |
| Marketing Campaign Effectiveness | 55% | 80% | +45% |
| Customer Retention Rate | 62% | 85% | +37% |
| Financial Data Consistency | 70% | 98% | +40% |
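The improvement figures in this table appear to be relative changes, (after − before) / before, rather than differences in percentage points. The short sketch below reproduces them under that assumption; the function name `relative_improvement` is illustrative and not part of any Quantzig tooling.

```python
def relative_improvement(before, after):
    """Percentage change of `after` relative to `before`."""
    return (after - before) / before * 100


# Before/after values taken from the table above.
metrics = {
    "Data Accuracy": (68, 95),
    "Marketing Campaign Effectiveness": (55, 80),
    "Customer Retention Rate": (62, 85),
    "Financial Data Consistency": (70, 98),
}

for name, (before, after) in metrics.items():
    points = after - before
    change = round(relative_improvement(before, after))
    print(f"{name}: +{points} percentage points, about +{change}% relative")
```

Reading the figures this way, Data Accuracy rose by 27 percentage points (68% to 95%), which corresponds to a relative improvement of roughly 40%.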

Qualitative Impact

| Impact Areas | Benefits |

| Enhanced Decision-Making | Reliable data for strategic planning |

| Increased Customer Engagement | Personalized marketing campaigns |

| Operational Efficiency | Reduced manual intervention and faster processes |

| Compliance & Risk Management | Improved adherence to regulatory standards |

How Quantzig Can Help

With more than 20 years of expertise, Quantzig has been a trusted partner in Data Quality Monitoring, helping businesses optimize operations and unlock the full potential of their data.

Quantzig's Expertise:

- Advanced Data Quality Frameworks – Improved accuracy and reliability

- AI-Driven Monitoring Solutions – Real-time insights and anomaly detection

- Customizable Governance Strategies – Scalable solutions for diverse industries

Get started with your complimentary trial today and explore our platform without any obligation. Discover our wide range of customized, consumption-driven analytics solutions and services, built for every level of analytical maturity.
